Takeaways: Software Heritage Symposium and Summit 2026

PARIS, January 28, 2026 – At the Software Heritage 10th Anniversary Symposium & Summit at UNESCO, the signal was clear: source code is no longer just a technical byproduct; it is the primary cultural record of the 21st century. We are moving past the black-box era to treat code as the shared history it actually is.

Highlighting this shift, Tawfik Jelassi (UNESCO Assistant Director-General for Communication and Information) announced a renewed partnership with Inria and Software Heritage, launching a new five-year program focused on digital public goods.

“UNESCO considers software source code to be more than a technical infrastructure; it is knowledge, it is memory, and it is a component of our shared documentary heritage with universal value,” Jelassi noted in his opening remarks.

He added: “Without preservation, societies lose their ability to understand how systems were built, why decisions were made, and how knowledge evolved over time. Preservation, therefore, is not about looking backwards; it is about enabling continuity. When software is preserved, knowledge remains verifiable. When software is accessible, learning becomes possible. When software is documented, innovation can build on what already exists. This is especially important for communities with fewer resources, where losing digital knowledge means losing opportunities.”

The essentials: Key insights at a glance

You can check out recaps from the individual sessions, but here are a few highlights:

In the panel on “Transparent AI for Inclusion,” the conversation shifted from high-level politics to the gritty reality of building sovereign technology. While the panel included voices from France’s AI leadership and a Brazilian government ministry, Hakim Hacid, Chief Researcher at the UAE’s Technology Innovation Institute, offered a candid look at the lessons learned from building the Falcon models. He admitted that the journey began with “big mistakes” before the team pivoted toward smaller, more efficient models that can run on an ordinary laptop, an essential move for true global inclusion.

For Hacid, the push for open-source AI is less about altruism and more about the three pillars of modern sovereignty: data, infrastructure, and energy. He highlighted the specific challenge of preserving underrepresented languages like Arabic, whose training data often shrinks to just 10% of its original volume after the “cleaning” process required for AI training. By releasing open-weight models, the team aims to prevent a future where nations are forced to outsource their data and culture to a “black box” they don’t control.

“Sovereignty means each nation should be capable of controlling the AI they consume. If you send your data to a model but don’t know what happens to it, you aren’t sovereign. But it goes beyond the code—if you don’t control the infrastructure and the energy required to run it, ‘Sovereign AI’ is just a dream.”

Roberto Di Cosmo, co-founder and director of Software Heritage, concluded by noting that the archive never explicitly planned to enter the AI space; it happened because the industry arrived at its doorstep. He emphasized that Software Heritage is now leveraging this position to help ensure the technology develops responsibly. “We didn’t set out to do AI, but it happened,” Di Cosmo said. “Now, we are working with companies that adhere to our principles,” principles first laid out in the Statement on LLMs published in 2023.

Building on the theme of digital sovereignty, the session on “Open Infrastructures for Software as a Digital Public Good” shifted the focus from AI models to the underlying plumbing of modern science. While representatives from the UN and European research bodies discussed high-level frameworks, Eurico Wongo Gungula, Rector of Oscar Ribas University and UNESCO Focal Point for Angola, grounded the conversation in the practical hurdles of the Global South.

For Gungula, software is no longer a peripheral tool; it is a “critical global infrastructure” that serves as the foundation for national knowledge circulation and informed policymaking. He detailed Angola’s strategic pivot over the last five years toward a model where software is treated as a sustainable public good. Central to this effort is the recently launched National Repository for Open Science, a technical backbone designed to ensure that research and data remain accessible rather than locked behind proprietary paywalls.

However, Gungula was refreshingly candid about the practical difficulties of implementation. While public institutions and the Ministry of Higher Education have aligned around open-source values, the private sector remains a significant hurdle. He noted a persistent “understanding gap”: private companies often view open initiatives with skepticism or see them as a threat to traditional commercial models.

“When we go to a private company, the understanding they have is totally different… we are working on the construction of a national network, but it is not always easy.”

Despite these frictions, Gungula’s message was one of progress. By participating in global scientific dialogues over the last two years, Angola is bridging the gap between local infrastructure and international standards. His intervention served as a reminder that for a Digital Public Good to be truly “global,” it must survive the transition from public policy to private-sector adoption.

During the “Supporting the Digital Commons” session, Dario Taraborelli of the Chan Zuckerberg Initiative (CZI) offered a blunt blueprint for stabilizing open science: stop treating software as a research “afterthought.”

By shifting its focus from new projects to the human maintainers behind “household” open-source libraries in the Python, R, and Julia ecosystems, CZI has invested $15 million to address the chronic bottlenecks of volunteer-led code. Its strategy centers on a multi-funder model, built with partners such as the Wellcome Trust, to sustain the often “invisible” labor that open science depends on.

“How can we think about designing a program that is not designed for maintainers as an afterthought after research, but tailored to the needs of maintainers… so we can do something about the bottlenecks in this space?”

Taraborelli’s perspective underscores a vital truth: digital sovereignty and national repositories are only as strong as the code they are built on. By funding the “invisible” work of maintenance, the scientific community helps ensure that the infrastructure of tomorrow remains open, secure, and, above all, functional.

The announcements

The Sovereign Tech Agency and Software Heritage announced a collaboration to ensure that critical open-source projects supported by the Sovereign Tech Fund are systematically archived, establishing permanent preservation as a standard for these projects.

“Open source maintainers build the foundations of our digital world, often without institutional support or long-term preservation guarantees,” said Adriana Groh, managing director of the Sovereign Tech Agency. “By partnering with Software Heritage, we ensure that the projects we invest in remain accessible, traceable, and preserved for the long term—protecting both the code and the story of its development.”

Mirrors

The IMDEA Software Institute has partnered with Software Heritage and Inria to establish a mirror in Spain. One of the seven IMDEA research institutes of the Madrid region, IMDEA Software specializes in software science and technology. Its mission is to develop methods and tools for building safer, more reliable, and more efficient software.

With the creation of this mirror, Spain joins the international network of distributed nodes, adding to existing mirrors in Italy (ENEA) and Greece (GRNET), and to a forthcoming mirror in Germany (UNIDUE).

More details are available on the Software Heritage mirrors page.

Software Heritage has signed Memoranda of Understanding with the Computer History Museum in Mountain View, California, and the Deutsches Museum in Munich, Germany. Stay tuned for more details on these new collaborations.

From pixels to paperweights

Beyond the high-level debates on sovereignty, the day was marked by a persistent effort to make intangible source code feel real. This ten-year milestone celebrated not just the data stored, but a decade spent making the invisible visible. The digital commons was brought into the physical world through a series of whimsical, tactile experiments, from 3D-printable “source code trees” that visualize the branching history of software to temporary tattoos of the Software Heritage logo. It was a reminder that after ten years of growth, code is no longer just a ghostly layer of logic but a cultural artifact we can touch and carry.

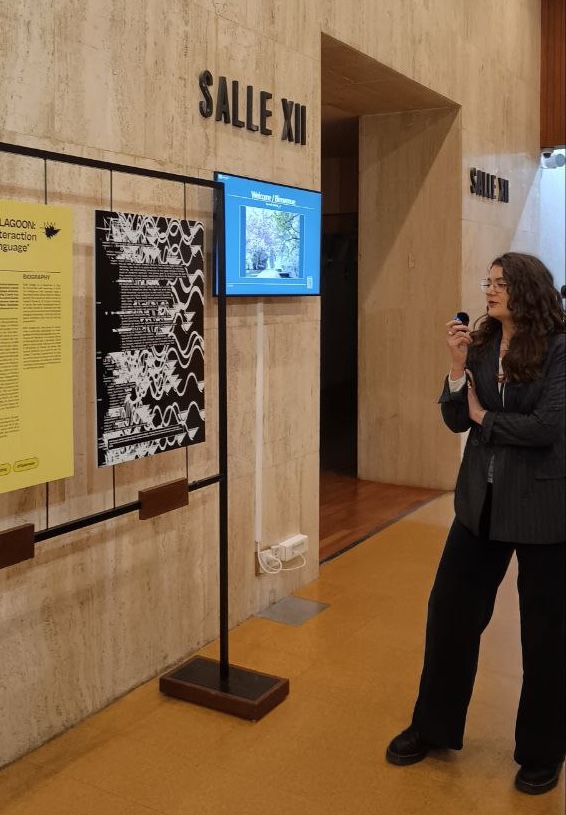

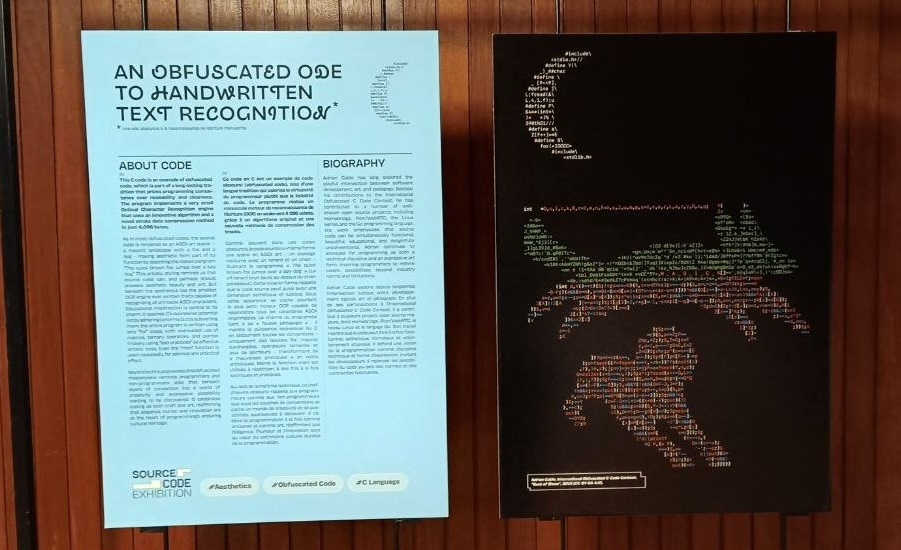

Source Code Exhibition

Just outside the auditorium where the sessions were held, an exhibition put source code itself on display. Moving beyond the standard tech showcase, it presented source code as the central artifact rather than the hidden background of a digital product. You can explore it online or visit its first public pop-up at the Fablab of the Cité des Sciences et de l’Industrie in March 2026. Entry is free and open to all.

A blueprint for the commons

During the closed morning sessions, we shared a commemorative memento with our sponsors to mark 10 years of Software Heritage. In keeping with the project’s open-source spirit, the design files are available to everyone, so you can print your own and mark a decade of the commons.

The emotional high point of the day came when the Software Heritage staff presented the founders with a physical globe: a symbolic nod to a decade spent mapping the world’s digital DNA, and a reminder that while the archive lives in the cloud, its impact is firmly grounded in the world we inhabit.

All videos from the afternoon sessions are available on our YouTube channel.

#SWH10